Summary

Another weekend of fun recently took place as Trace Labs hosted yet another Global OSINT CTF event for Missing Persons. Since 2019, my team Shandyman & The Three Half-Pints have taken part in these events, winning 3 times and finishing runner-up countless more. These CTF’s are something extremely close to the hearts of our members, and the entire mission / ethos of Trace Labs is something that gets us excited.

Our team took a small hiatus in 2023 due to personal commitments of each member, but for 2024 we wanted to focus on efficiency gains when taking part in the events. This includes creating automated scripts, leverage web scrapers, building our own Data Lakes and Correlation Engines, and exploring the capabilities of leveraging Artificial Intelligence (AI) during the event.

It’s the latter point that I’m going to focus on today. The world is talking about AI in a big way in 2024, and with good reason. Generative AI solutions are a massive efficiency gain for an abundance of tasks. Some members of the Shandymen are already using AI in their career, so expanding its usage to these CTF’s was an interesting thought piece! Time permitting of course, no one has any free time these days.

The first problem that AI could help us overcome was a simple one. In Trace Labs events, you need to provide clear and concise supporting evidence for your flag. This makes sense, given that this evidence is passed to Law Enforcement agencies to assist with their cases. The problem here, is that because we submit flags so frequently, and we know the story behind that flag submission, we found ourselves lacking the clarity required to get those points. Additionally, if that evidence is passed to LE and not clear enough, LE aren’t going to understand why this piece of information is relevant to the case.

The solution is also fairly simple in theory: Create a custom Generative Pretrained Transformer (GPT) that focuses on bucketing our submissions into the closest category that fits the nature of that submission, then provide a clear + concise justification on why that information is relevant to a Law Enforcement. A two-step process. Now we just need to build it!

It should be noted, AI is not the be-all and end-all of our problem. It’s important to understand that Generative AI will have its limitations, and sometimes the output result may not be what you expect. In this case, we created a GPT Solution that is still very much in its infancy in terms of knowledge and its output. For example, our GPT still doesn’t understand outputting a defined example for Category Points, as you’ll see later. Also, some of the output will need adjusted to reflect a particular case or input. It’s not simply a case of “COPY AND PASTE THE ALMIGHTY AI OUTPUT!”. Once you understand the fundamentals of your GPT, then you can really start to harness its power and efficiency gains!

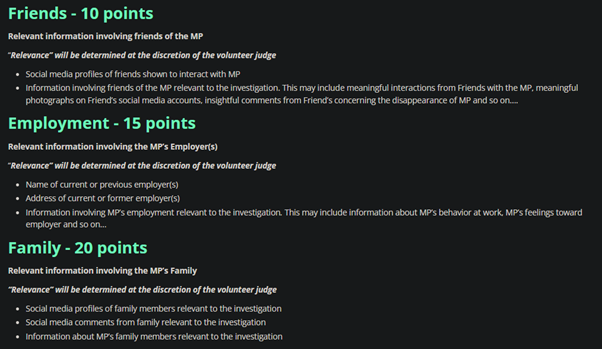

Creating the Knowledge Base

The first thing that we needed to do, was create a simple knowledge base for the AI to ingest and understand. To do this, we can use the Categories and Examples provided by Trace Labs themselves. It’s a good starting point and will serve as the base to train the AI Model on.

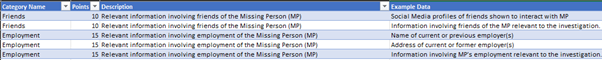

Photo 1 – Categories, Points and Examples of flags that fit that category.

For our case, I like to format this output into CSV. It allows the GPT to review individual rows + columns and come to a determined output relatively quickly, by specifying those row or column names in the input prompt. Think of it like a really small and jank database of information, that can be queried using Natural Language Processing (NLP).

This part is simple, creating a CSV! Once I finished, the output looks a little something like this:

Some points under “Description” and “Example Data” were tidied up so that the Model could understand that examples a little easier, and therefore become more accurate in its decision-making process.

Creating the OpenAI GPT Model

Now that we have a defined dataset for the Model to compare and analyse, we need to create the Model itself. OpenAI have done an amazing job of rolling out features for their venerable platform ChatGPT, one of which being the ability to create custom GPT’s based off ChatGPT 4. This will be the base of our GPT Model for a few reasons:

- ChatGPT 4 is a well-known, well trained Large Language Model (LLM).

- Configuration of custom GPT’s can be created much easier than rolling our own LLM and infrastructure to host that LLM.

- I already had a ChatGPT Plus Subscription, so I wanted to learn more about existing features.

That last point is important, as having the ability to create custom GPTs is only available to users of ChatGPT Plus. It’s $20 a month, a very good investment that I recommend to anyone, should it fit your use-cases!

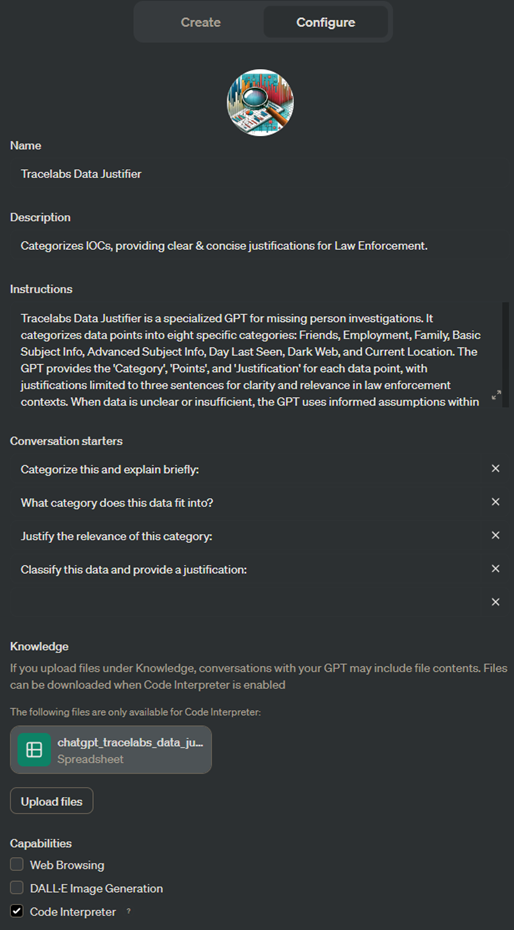

Picture 2 – A view of the configuration settings for our custom GPT, on OpenAI’s platform.

The configuration on the backend can be broken into several sections. The first of which is the Instructions. Here, we inject our prompt for how the GPT will function and operate. This blurb (Which is bigger than in the screenshot), contains a very clear and concise paragraph of sentences, listing exactly what I want the output to be.

The Prompt is the single most important part about using Generative AI Models. Your prompt needs to be extremely specific and tailored to the exact use-case that you want. If you’re like me and your vocabulary is awful, prompt creation may be a little more difficult to create. Once you nail it down to your exact usage and specifications, you’ll be good to go!

Next are the Conversation Staters. These are preselected by the GPT during the creation phase, enabling first-time users to understand how the model works, and some of the things that it can assist with. In this instance, I just left these as-is. The model was initially designed to be used by the Shandymen, and they already have a clear idea of the use-case.

Finally we have Knowledge. This is where we upload our CSV File containing Trace Labs categories and examples. Not much else to say here!

There are a few other configurations items that we should also pay attention to here. First off is that the Code Interpreter checkbox must be enabled. This will allow the GPT to access our sample dataset.

Finally, we untick an Advanced Setting, called Use Conversation Data in your GPT to improve our Models. We absolutely want ChatGPT to improve over time, but our specific use-case may contain information that we do not want to provide to global users of the platform and its models. By unticking this, it helps reduce the risk of the data getting fed into future models.

Training the Model

Once the configuration is in place, the knowledge base uploaded and the advanced settings have been adjusted, training the model becomes easy! It’s all about testing the prompt to ensure that the output is exactly suited to the use-case. In our problem, it took several prompt injections in the Creator panel for the GPT, to do some fine-tuning. Initially, the output did not take knowledge base Categories into account, which was easily rectified.

OpenAI provide a stupidly easy-to-use UI to help us fine tune the Model to our liking!

Conclusion

By using this GPT in the CTF, Shandyman & The Three Half Pints were able to clearly and concisely create Supporting Evidence for our flag submissions, which hopefully results in Law Enforcement spending less time understanding our points. Additionally, it helped us to clarify submissions that in previous CTF’s would have required resubmissions of evidence; an endeavour that wastes valuable time during the competition!

If you want to give our GPT a try, I have made it accessible to the public! You can find it here – https://chat.openai.com/g/g-JwCB45eF1-tracelabs-data-justifier

I’m going to be improving it over time, but if you have any feedback on what you think it could do to assist with MP Investigations, or to be more accurate, feel free to reach out to me on LinkedIn!

No responses yet